Josh McCoy and Michael Mateas. 2008. An Integrated Agent for Playing Real-Time Strategy Games. In Proceedings of the 23rd National Conference on Artificial Intelligence.

The paper presents a real-time strategy (RTS) AI agent that integrates multiple specialist components to play a complete game. The idea is to partition the problem space into the domains of competence seen in expert human players, and to use the expert knowledge of human players in each domain to play a complete game.

Introduction

RTS games provide a rich and challenging domain for autonomous agent research. In games like Warcraft and Starcraft, the player has to build up armies to defeat the enemy while defending his own base. The player must make real-time decisions that directly or indirectly affect the environment, and this complexity makes playing an RTS game a big challenge for an AI agent.

RTS games contain a large number of unique objects and actions. Domain objects include units and buildings with different capabilities and attributes, research and upgrades for these units and buildings, and resources that must be gathered. Domain actions include unit and building construction, choosing which research to pursue for each unit and building, resource management, and using unit capabilities during battle.

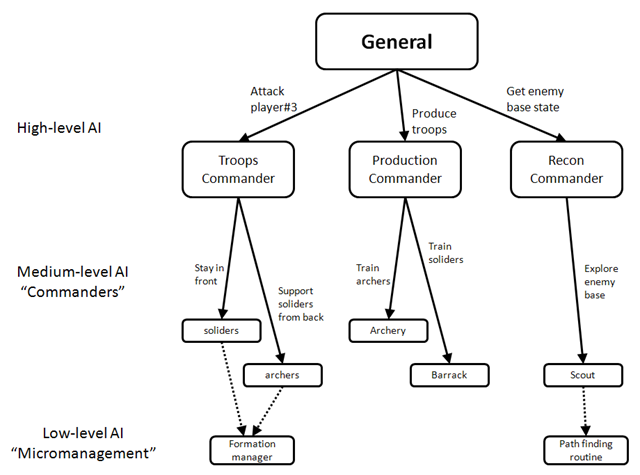

Actions in RTS games occur at multiple levels:

- High level strategic decisions: which type of unit and building to produce, which enemy to attack.

- Intermediate (Medium) level tactical decisions: how to deploy a group of units across the map.

- Low-level micromanagement decisions: individual unit actions.

The combination of these three levels of decisions makes it hard to use the game-tree search techniques that have proven successful for games like chess. To illustrate the complexity, a typical RTS player must handle multiple simultaneous real-time tasks. In the middle of a game, a player may be conducting an attack on an enemy base while researching upgrades for his army and managing resources at the same time, and it is not unusual for him to also be defending his own base against an attack from the rear. To make matters more complex, the RTS game environment incorporates incomplete information (i.e., it is a partially observable environment) through the “fog of war”, which hides most of the map. This requires the player to repeatedly send scouts across the map to learn the current state of the enemy.

These attributes of the RTS domain require an agent architecture that incorporates human-level decision making about multiple simultaneous tasks at multiple levels of abstraction and combines reasoning with real-time activity.

The SORTS agent is capable of playing a complete RTS game, including the use of high-level strategy. While SORTS is an impressive agent, there is room for improvement. The agent developed in this paper adds a reactive planning language capable of more tightly coordinating asynchronous unit actions in unit micromanagement tasks, decomposes the agent into more distinct modules, and incorporates expert human knowledge.

Related Work

Current research on RTS AI agents tends to focus either on low-level micromanagement or on high-level strategy while leaving tactics and micromanagement to the individual units' built-in AI. High-level strategy and micromanagement are both important for RTS play, and the failure to build an integrated agent that combines all the AI decision levels has resulted in agents that can play a game but not at a level competitive with human players.

A number of researchers have focused on applying a single algorithm to a single aspect of the game: Monte Carlo planning for micromanagement, the Planning Domain Definition Language (PDDL) to explore the tactical decisions involved in build orders, and relational Markov decision processes (MDPs) to generalize strategic plans. Each of these made local improvements, but none was integrated into a single agent that plays a complete game. There has also been work on evolutionary learning of tactical decisions and on case-based reasoning over human traces, which makes it possible for an agent to play a complete game. However, these methods were implemented as a single component concerned with high-level strategy and limited tactics, leaving micromanagement to the individual units' built-in AI.

SORTS is an agent capable of playing a complete RTS game, incorporating high-level strategy. Unit micromanagement is handled using finite state machines (FSMs). To enable a larger amount of tactical coordination, the military and resource FSMs are coordinated by global coordinators. Simple learning is used in these global coordinators to enhance the agent's performance.

While the SORTS agent is impressive and capable of playing a complete game by integrating multiple modules, there are a number of improvements to be made. The agent proposed in the paper adds a reactive planning language capable of coordinating asynchronous unit actions in unit micromanagement.

Expert RTS Play

Expert RTS players and the RTS community have developed standard strategies, tactics, and micromanagement techniques. As in chess, part of expert play is choosing techniques at multiple levels of abstraction in response to the recognized opponent strategy, tactics, and micromanagement, and then improvising with these techniques. However, RTS play far exceeds chess in complexity.

There are general rules of thumb in RTS play. One is the “behind on economy” strategy, which, as I understand it, is about producing troops based on your current economy: the more resources you have, the more troops you can train. However, this strategy guarantees a loss when tried versus an expert player. A rule of thumb can be violated depending on the situation (e.g., available resources on the map, distance between the player and the enemy, etc.). As an example, the “Probe Stop” strategy halts economic expansion in favor of putting all available income into military production, which results in a temporary spike in military strength. Used unwisely, this strategy results in a complete loss if the produced troops die early.

At the level of high-level strategy, the player has to develop and deploy strategies that coordinate the style of economic build-up, the base layout, and the offensive and defensive style. A well-known strategy in “Warcraft 2” is the “Knight's rush”. The knight is a heavy unit in the middle of the game's tech tree. The player focuses on making the minimum necessary upgrades and buildings to produce knights, and as soon as they are available performs a fast rush to take out the enemy. This strategy trades early defense for later offensive power, because in the early game the player has no defensive structures or units.

The player decides on a high-level strategy at the beginning of the game based on information such as map size, number of opponents, and the state of resources on the map. However, the player must be ready to switch strategy based on new information gathered through map scouting.

Medium-level tactics concern deployment and grouping decisions. Unlike micromanagement, tactics involve coordinating groups of units to perform a specific task. One common tactic in “Warcraft 3” is coordinating units to block an enemy retreat using area effect attacks, or to block terrain bottlenecks using units (e.g., standing on a bridge that allows only a few units to pass at a time). Tactical decisions require knowledge of common tactics and their counter-tactics.

At the level of low-level micromanagement, expert human players have developed techniques applicable to nearly all RTS games. The “dancing” technique is a specific use of ranged units in which a group of ranged units attacks simultaneously, then “dances” back during their “cooldown” (i.e., the time a unit needs after each attack before it can attack again). Dancing allows weak ranged units to stay away from the melee battle area during cooldown. We call “dancing” a micromanagement technique because it involves detailed control of individual unit moves. When micromanagement is absent, units respond to high-level directives such as “Attack” using their simple built-in behaviors (e.g., path finding, obstacle avoidance, etc.).

In RTS games, the map is only partially revealed because of the “fog of war”. This requires the player to send scouts across the map to find the enemy base, learn the nature of the enemy's economic build-up (which reveals the strategy the enemy is likely to follow), and learn the physical layout of the base (e.g., whether the base is heavily defended).
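The “dancing” pattern described above can be sketched as a per-tick decision for a single ranged unit. This is only an illustration, not the paper's ABL behavior: the `RangedUnit` fields, the `dance_step` function, and the order names are hypothetical, and the sketch assumes time advances in discrete ticks.

```python
from dataclasses import dataclass


@dataclass
class RangedUnit:
    cooldown_left: int    # ticks remaining before the unit can attack again
    attack_cooldown: int  # ticks between two attacks (hypothetical value)


def dance_step(unit: RangedUnit, enemy_in_range: bool) -> str:
    """Return this tick's order for the unit: 'attack', 'retreat', or 'advance'."""
    if unit.cooldown_left > 0:
        # Still cooling down: step away from the melee area.
        unit.cooldown_left -= 1
        return "retreat"
    if enemy_in_range:
        # Ready to fire: attack and start a new cooldown.
        unit.cooldown_left = unit.attack_cooldown
        return "attack"
    # Ready to fire but out of range: close the distance.
    return "advance"
```

Calling `dance_step` once per tick for every unit in a group yields the attack-then-fall-back rhythm; a real implementation would also need to pick retreat positions and keep the group's attacks synchronized.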

Framework

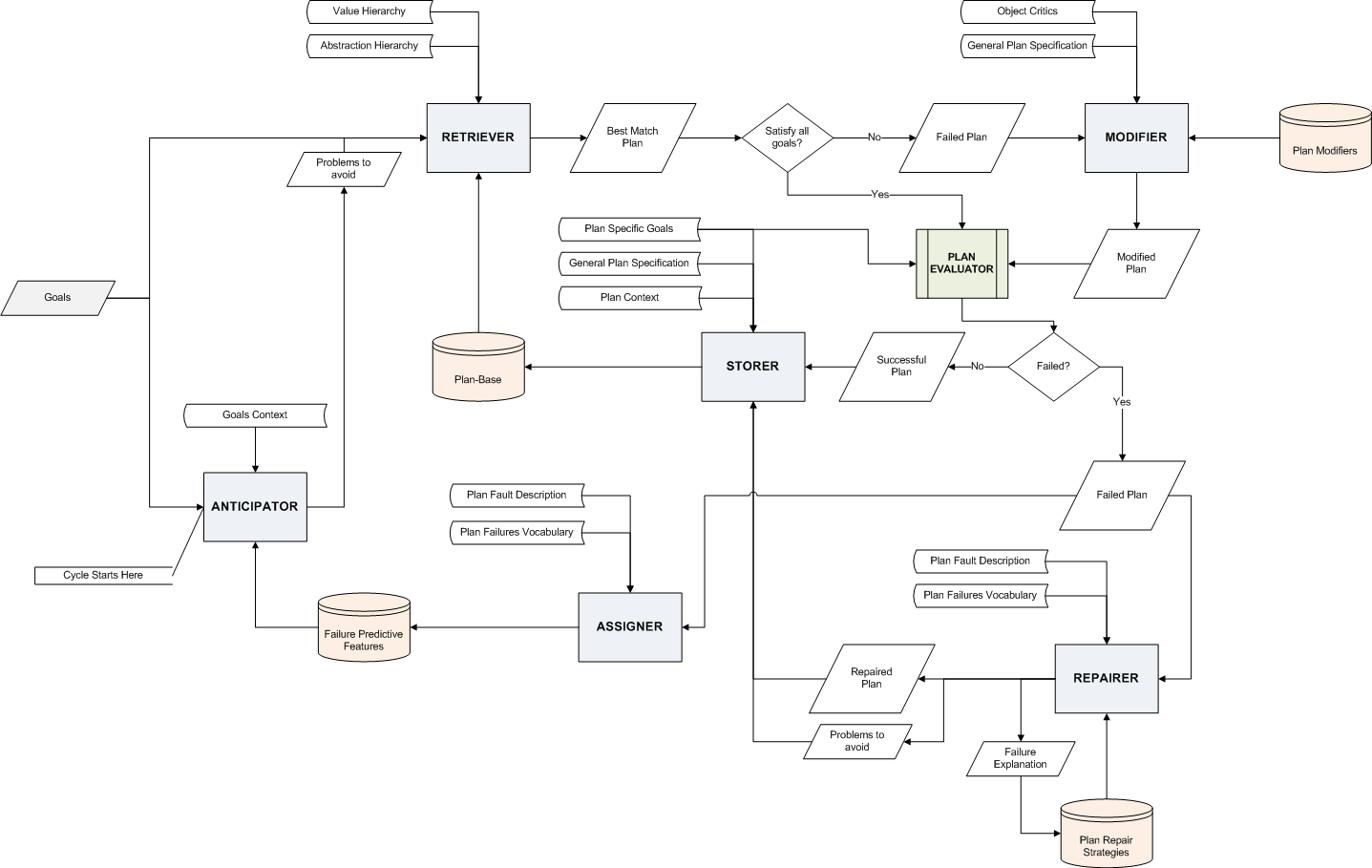

The software framework of the developed agent consists of ABL (A Behavior Language), a reactive planning language, connected to the Wargus RTS engine.

Agent Architecture

The agent is composed of distinct managers, each of which is responsible for one or more of the major tasks mentioned in the Expert RTS Play section. The agent consists of strategy, income, production, tactics, and recon managers.

Because the agent is factored according to the expert play tasks, it is easier to modify individual managers and to measure the effect of each manager on overall performance as its competence is increased or decreased.

Strategy Manager

The strategy manager is responsible for high-level strategic decisions. The first task is to determine the proper initial order in which to construct buildings and units.

The InitialStrategy module uses the recon manager to learn the distance to the enemy base, and this distance is used to choose the proper strategy. If the enemy base is close, a rush attack strategy is applicable, in which one barracks is built and some units are produced without building a second farm. This lets the agent defend against early enemy attacks and also gives it the potential to make an early attack (a.k.a. a rush attack). If the enemy base is far away, there is time to build a robust economy and produce a large military before engaging in battle.

The TierStrategy module is the highest-priority recurring task in the strategy manager. At each of the three tiers in Wargus, TierStrategy's responsibilities include maintaining control of the unit cap with regard to production capacity, constructing units and buildings superior to those of the opponent, and attacking when the agent has a military unit advantage.

TierStrategy starts making decisions after the initial build order controlled by InitialStrategy is complete. A primary responsibility of TierStrategy is to determine which kind of building or unit to produce during the game past the initial build order. TierStrategy is also responsible for determining when to attack, given the number of military units controlled by the agent versus the opponent.
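As a rough sketch of this distance-based choice, the function below picks between the two openings. The function name, the strategy labels, and the `rush_distance` threshold are assumptions made for illustration; the paper's actual threshold and ABL behaviors are not given here.

```python
def choose_initial_strategy(distance_to_enemy: float,
                            rush_distance: float = 40.0) -> str:
    """Pick an opening from the scouted distance to the enemy base.

    rush_distance is a hypothetical cut-off in map units.
    """
    if distance_to_enemy <= rush_distance:
        # Close enemy: one barracks and early units, no second farm.
        return "rush"
    # Distant enemy: build the economy and a large army first.
    return "economy"
```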
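The recurring TierStrategy decision might look roughly like the following. The ordering of the checks, the function name, and the returned labels are assumptions for illustration; only the three concerns (unit cap, production, attacking with a military advantage) come from the description above.

```python
def tier_decision(own_military: int, enemy_military: int,
                  unit_count: int, unit_cap: int) -> str:
    """Return the next high-level action for the current tier."""
    if unit_count >= unit_cap:
        # No room for more units: raise the cap before producing anything else.
        return "raise_unit_cap"
    if own_military > enemy_military:
        # The agent holds a military unit advantage: go on the offensive.
        return "attack"
    # Otherwise keep producing units and buildings.
    return "produce"
```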

Income Manager

The income manager is responsible for the details of controlling the workers who gather resources, releasing workers for construction and repair tasks, and maintaining the wood-to-gold ratio set by the strategy manager.
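One simple way to hold a wood-to-gold ratio is to send each newly available worker to whichever resource is currently under target. The sketch below assumes the ratio is expressed as wood workers per gold worker, which is an interpretation rather than something the summary states; the function name is hypothetical.

```python
def next_assignment(gold_workers: int, wood_workers: int,
                    wood_to_gold_ratio: float) -> str:
    """Decide whether the next free worker should gather 'gold' or 'wood'."""
    if gold_workers == 0:
        # Avoid dividing by zero and start with gold.
        return "gold"
    current_ratio = wood_workers / gold_workers
    # Below the target ratio means wood is under-staffed.
    return "wood" if current_ratio < wood_to_gold_ratio else "gold"
```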

Production Manager

The production manager is responsible for constructing units and buildings. It has modules that serve three priority queues: a unit construction queue, a building construction queue, and a queue for repeated cycles of unit and building construction.

The production manager also applies what is called “resource locking”: the resources required for a building are virtually subtracted from the current physical resources as soon as the construction decision is made. This is needed because time passes between the moment the decision is taken and the moment the worker reaches his destination to start building, and without the lock the same resources could be committed elsewhere in the meantime.
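A priority queue of production requests can be sketched with Python's `heapq`. The class name and the convention that a lower number means higher priority are assumptions; the paper's queues are ABL structures, not this class.

```python
import heapq
import itertools


class ProductionQueue:
    """Priority queue of build requests; lower number = higher priority."""

    def __init__(self):
        self._heap = []
        self._order = itertools.count()  # tie-breaker keeps insertion order

    def push(self, priority: int, item: str) -> None:
        heapq.heappush(self._heap, (priority, next(self._order), item))

    def pop(self) -> str:
        """Remove and return the highest-priority request."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

A production manager along these lines would hold one such queue for units, one for buildings, and one for recurring build cycles, re-pushing recurring items after they are served.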
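Resource locking can be illustrated with a small ledger that tracks reserved amounts separately from the physical stockpile. The class and method names are hypothetical, and the sketch assumes only two resources, gold and wood.

```python
class ResourceLedger:
    """Tracks physical resources and the portion reserved for pending builds."""

    def __init__(self, gold: int, wood: int):
        self.gold = gold
        self.wood = wood
        self.locked_gold = 0
        self.locked_wood = 0

    def available(self):
        """Resources that are not yet promised to a pending construction."""
        return self.gold - self.locked_gold, self.wood - self.locked_wood

    def lock(self, gold: int, wood: int) -> bool:
        """Reserve resources at decision time; fail if not enough remain."""
        free_gold, free_wood = self.available()
        if gold > free_gold or wood > free_wood:
            return False
        self.locked_gold += gold
        self.locked_wood += wood
        return True

    def commit(self, gold: int, wood: int) -> None:
        """The worker started building: spend the resources, release the lock."""
        self.gold -= gold
        self.wood -= wood
        self.locked_gold -= gold
        self.locked_wood -= wood
```

Other decisions consult `available()` rather than the raw stockpile, so a second build cannot be approved with resources already promised to the first.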

Tactics Manager

The tactics manager takes care of unit tasks pertaining to multi-unit military conflicts. It has three modules: the first assigns military units to groups, the second keeps military units on task by making sure they do not go off course, and the third removes slain units from military unit groups.

The tactics manager provides an interface for high-level control of the military groups, to be used by the strategy manager. All basic military unit commands are made available to the strategy manager (e.g., move, attack, stand ground, patrol, etc.), as well as more abstract commands (e.g., attack the enemy base).
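The group bookkeeping and command interface described above could be sketched as follows. All names here are hypothetical, units are represented by integer ids, and the "keep units on task" module is reduced to remembering each group's current order.

```python
class TacticsManager:
    """Minimal sketch: groups of unit ids plus a group-level command interface."""

    def __init__(self):
        self.groups = {}  # group name -> set of unit ids
        self.orders = {}  # group name -> current order

    def assign(self, unit_id: int, group: str) -> None:
        """Module 1: put a military unit into a group."""
        self.groups.setdefault(group, set()).add(unit_id)

    def remove_slain(self, alive_ids) -> None:
        """Module 3: drop units that no longer exist from every group."""
        alive = set(alive_ids)
        for members in self.groups.values():
            members &= alive

    def command(self, group: str, order: str):
        """Interface for the strategy manager: order a whole group at once."""
        self.orders[group] = order
        return [(unit_id, order) for unit_id in sorted(self.groups[group])]
```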

Recon Manager

The recon manager is responsible for providing the other managers with aggregate information (e.g., the number of workers and military units the opponent has). Current academic and commercial RTS AIs assume perfect information (i.e., they ignore the “fog of war”), which makes the environment fully observable. The developed agent removes this perfect information assumption so that the recon manager can carry out reconnaissance tasks (e.g., sending scouts across the map to gather information).
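Aggregate information of this kind can be sketched as a tally over enemy units that scouts have actually seen. The class, its methods, and the unit kinds are hypothetical; the sketch assumes each enemy unit has a stable id so repeated sightings are not double-counted.

```python
from collections import Counter


class ReconManager:
    """Keeps last-known enemy sightings and reports aggregate counts."""

    def __init__(self):
        self._sightings = {}  # enemy unit id -> kind ("worker", "military", ...)

    def observe(self, unit_id: int, kind: str) -> None:
        """Record (or refresh) a sighting reported by a scout."""
        self._sightings[unit_id] = kind

    def forget(self, unit_id: int) -> None:
        """Remove a unit known to be destroyed."""
        self._sightings.pop(unit_id, None)

    def summary(self) -> Counter:
        """Aggregate counts per unit kind for the other managers."""
        return Counter(self._sightings.values())
```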

Managers Interdependency

This section describes the relations between the managers and how the individual managers' competencies are integrated to play a complete game. The paper then discusses the effect of removing a given manager from the system. The results are logical and could be deduced from rules of thumb.

Results

This section shows that the integrated agent performed well against two scripted agents: Soldier's rush and Knight's rush. Each script was tested on two map sizes, medium and large. The agent played 15 games for each combination of map and scripted opponent.

Many of the losses suffered by the developed agent were due to the lack of sophistication in the tactics manager. Specifically, the tactics manager fails to concentrate military units in an area in either offensive or defensive situations. When many parallel decisions are made elsewhere in the agent, small delays are introduced when sending commands to units, causing units to trickle towards an engagement and be easily defeated. Unit formation management is left as future work.