Agenda:

• What we’ve done?

• How we’ve done it?

• What you can do?

• What’s next?

What we’ve done

We developed an agent that’s capable of:

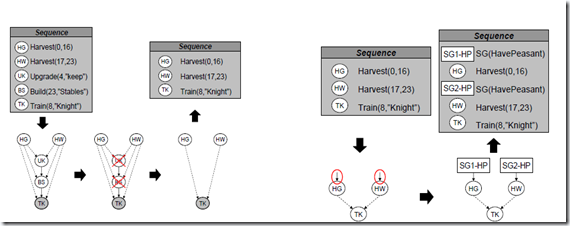

1. Learning from human demonstration.

2. Building a plan online and adapting it online.

3. Assessing the current situation and reacting to it.

4. Learning from its failures.

5. Encapsulating its learnt knowledge in a portable casebase.
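
As a rough illustration of what a "portable casebase" could look like, the sketch below stores cases as plain records, retrieves the nearest case for a goal, and round-trips the whole casebase through JSON so it can be moved between machines. The `Case` fields, the Euclidean `distance`, and the goal and action names are all assumptions made for the example; they are not taken from the engine's actual code.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Case:
    # Hypothetical case layout: a goal, the game state it was learnt in,
    # and the ordered plan (action names) that was demonstrated for it.
    goal: str
    state: dict
    plan: list


def distance(a, b):
    # Euclidean distance over numeric state features; missing features count as 0.
    keys = set(a) | set(b)
    return sum((a.get(k, 0) - b.get(k, 0)) ** 2 for k in keys) ** 0.5


class CaseBase:
    def __init__(self, cases=None):
        self.cases = list(cases or [])

    def retain(self, case):
        # Store a newly learnt case.
        self.cases.append(case)

    def retrieve(self, goal, state):
        # Return the stored case for this goal whose state is closest, or None.
        candidates = [c for c in self.cases if c.goal == goal]
        if not candidates:
            return None
        return min(candidates, key=lambda c: distance(c.state, state))

    def dumps(self):
        # Serialize to JSON text so the casebase is portable.
        return json.dumps([asdict(c) for c in self.cases])

    @classmethod
    def loads(cls, text):
        # Rebuild a casebase from JSON text produced by dumps().
        return cls(Case(**d) for d in json.loads(text))


cb = CaseBase()
cb.retain(Case("build_army", {"gold": 500, "peasants": 4},
               ["build_barracks", "train_footman"]))
cb.retain(Case("build_army", {"gold": 2000, "peasants": 10},
               ["build_barracks", "build_stables", "train_knight"]))

restored = CaseBase.loads(cb.dumps())
best = restored.retrieve("build_army", {"gold": 600, "peasants": 5})
print(best.plan)
```

Keeping the cases as plain data (no engine objects inside them) is what makes the JSON round trip possible; a real casebase would likely need a richer state description and similarity measure.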

How we’ve done it

1. Reading about general game AI:

a. AI Game Engine Programming.

b. Artificial Intelligence for Games.

c. Programming Game AI by Example.

2. Reading about the latest research in RTS AI:

a. All papers are in the project repository, in the “Adaptation and Opponent Modeling” folder.

3. Reading about machine planning:

a. The machine planning papers are in the project repository, under the “CBR/CBP” folder.

4. Reading about machine learning:

a. Reinforcement Learning: An Introduction.

5. Understanding Stratagus code:

a. Open the code and enjoy.

The minimal requirements are:

1. Reading Santi’s papers.

2. Reading about machine learning.

3. Understanding Stratagus code.

What you can do

1. Enhance the current engine (60% Theory, 40% Code):

a. Human demonstration feature:

i. Adding parallel plan extraction.

ii. Adjusting attack learning method.

b. Planning feature:

i. Adding parallel plan execution.

c. Situation Assessment:

i. Converting it from static rules into generated decision trees.

d. Learning:

i. Needs intensive testing and parameter tuning.

2. Modularize the engine (20% Theory, 80% Code):

a. Make the middle layer generic for any RTS game.

b. Make the middle layer’s configuration scripted (or otherwise external to the code).

c. Modularize the algorithms used, so that we can reuse them in any suitable context.

d. Expose the engine as an API.

3. Knowledge Visualization (N/A):

a. Develop a tool that visualizes the agent’s knowledge and summarizes how it will react while playing the game. This tool will let us investigate the agent’s knowledge in depth.

4. Tactics Planning and Learning.

5. Propose other approaches for planning and learning.

6. Parallelized AI:

a. Some processing in the engine is done sequentially where it could be done in parallel. Use a distributed/parallel API (e.g. OpenCL) to parallelize the agent’s processing.
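
To make item 1.c above more concrete (replacing static situation-assessment rules with generated decision trees), here is a minimal sketch that builds a tree from labelled observations using an ID3-style entropy split. The feature names (`enemy_army`, `own_army`) and the situation labels are invented for the example, and ID3 is only one possible way to generate the tree; nothing here reflects the engine's real rules or data.

```python
import math
from collections import Counter


def entropy(labels):
    # Shannon entropy of a list of class labels.
    total = len(labels)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())


def build_tree(rows, labels, features):
    # Leaf: every label agrees, or no features remain -> use the majority label.
    majority = Counter(labels).most_common(1)[0][0]
    if len(set(labels)) == 1 or not features:
        return majority

    def remainder(feature):
        # Weighted entropy left after splitting on this feature.
        groups = {}
        for row, label in zip(rows, labels):
            groups.setdefault(row[feature], []).append(label)
        return sum(len(g) / len(labels) * entropy(g) for g in groups.values())

    # Lowest remaining entropy is the same as highest information gain.
    best = min(features, key=remainder)
    rest = [f for f in features if f != best]
    branches = {}
    for value in {row[best] for row in rows}:
        sub_rows = [r for r in rows if r[best] == value]
        sub_labels = [l for r, l in zip(rows, labels) if r[best] == value]
        branches[value] = build_tree(sub_rows, sub_labels, rest)
    return {"feature": best, "branches": branches, "default": majority}


def classify(tree, row):
    # Walk the tree; fall back to the node's majority label for unseen values.
    while isinstance(tree, dict):
        tree = tree["branches"].get(row[tree["feature"]], tree["default"])
    return tree


# Hypothetical observations: coarse game-state features -> assessed situation.
rows = [
    {"enemy_army": "large", "own_army": "small"},
    {"enemy_army": "large", "own_army": "large"},
    {"enemy_army": "small", "own_army": "small"},
    {"enemy_army": "small", "own_army": "large"},
]
labels = ["defend", "attack", "expand", "attack"]

tree = build_tree(rows, labels, ["enemy_army", "own_army"])
print(classify(tree, {"enemy_army": "large", "own_army": "small"}))
```

The point of the sketch is that the tree is produced from data rather than written by hand, so the assessment can change as new observations are collected.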

What’s next?

Tasks are divided as follows:

1. Muhamad Hesham will read about General Game AI.

2. Magdy Medhat will read about the latest research in RTS Games AI.

3. Mohamed Abdelmonem will read about machine planning.

4. Islam Farid will read about machine learning (especially Reinforcement Learning).

We’ve also agreed on the following:

1. We’ll first enhance the engine (i.e. develop feature #1) while we are reading and building our knowledge.

2. Then, we’ll start developing learning and planning at the tactics level.

How we’ll enhance the engine

Every team member will work on a specific feature together with either Abdelrahman or Omar, alongside their reading. For now, Magdy Medhat will work on the first feature.

Final note: we’ll post tasks on the blog, and you can track the results there.

Expert interaction is added to the environment.

Game state will come from the world directly.

Wargus is referred to as the environment.

Replace the phrase “are Cases” with “Case”.
